How to Reduce URL Confusion and Protect Search Visibility

Duplicate content SEO is one of the most misunderstood areas of technical optimization. Many site owners hear the term and assume it means an automatic penalty. Others ignore it completely because they have been told search engines can “figure it out.”

The more practical truth sits between those extremes. Duplicate content usually does not lead to a manual penalty on its own, but it can create serious SEO inefficiencies. It can split ranking signals, confuse search engines about which page to index, waste crawl attention, and weaken the visibility of pages that should be central.

That is why this topic matters in a pillar-and-cluster content model. A website building topical authority needs clear page roles, clean internal signals, and a structure that tells search engines which URL should represent each topic. Duplicate content gets in the way of that clarity.

This cluster page explains duplicate content SEO in practical terms: what duplicate content is, why it matters, how it happens, what to do about it, and how to approach it strategically.

What Is Duplicate Content SEO?

Duplicate content SEO refers to the management of identical or very similar content that appears on multiple URLs, either within the same website or across different websites.

In practical terms, duplicate content becomes an SEO issue when search engines encounter multiple versions of substantially the same page and have to decide which one should be crawled, indexed, and ranked.

That situation is more common than many teams realize. Duplicate content does not only come from copying and pasting articles. It often comes from technical patterns such as:

- parameter-based URLs

- filtered category pages

- HTTP and HTTPS duplication

- trailing slash inconsistencies

- print versions

- tracking URLs

- CMS-generated archives

- product variants

- syndicated content

Duplicate content is usually a consolidation problem

The core issue is not that search engines “hate duplicates.” The issue is that they need to choose one version as primary. If your site does not make that choice clear, signals can be diluted across multiple URLs.

That is why duplicate content SEO is closely tied to canonical tag strategy, URL structure SEO, XML sitemap SEO, and crawling and indexing.

Why Duplicate Content Matters

Duplicate content matters because search visibility depends on clarity. Search engines want to understand which page should represent a topic. When several URLs look interchangeable, that decision becomes less efficient.

It can split ranking signals

If links, internal references, and crawl activity are spread across multiple versions of similar content, the preferred page may not receive the full benefit of those signals. This is one of the most practical reasons duplicate content matters.

It can confuse indexation

Search engines may crawl several duplicate-like pages but index only one of them. If the wrong version is selected, your preferred URL may struggle to rank even when the content itself is strong.

This is why duplicate content SEO connects directly to pages about indexation control and canonical tags.

It can waste crawl resources

On larger sites, duplication can create unnecessary crawl load. Search engines may spend time processing pages that add little unique value instead of focusing on the URLs that actually matter.

A natural related article here would be a cluster page on crawlability and crawl efficiency.

It weakens topical structure

For websites building topic clusters, duplication makes the site architecture less clear. Instead of one strong page owning a subject, several overlapping pages may compete with one another.

How Duplicate Content SEO Works

Duplicate content SEO is mostly about helping search engines consolidate similar URLs around the right page.

Search engines do not evaluate duplication in a simplistic all-or-nothing way. They assess how similar pages are, how the site links to them, which canonical signals are present, which version appears in sitemaps, and which page seems most useful as the representative version.

Search engines choose a representative URL

When several pages are similar, search engines often choose one as the canonical or representative result, even if you do not specify one. That is where problems begin: the chosen version may not be the one you want.

Technical signals influence the decision

The main signals that shape this include:

- canonical tags

- redirects

- internal links

- sitemap inclusion

- preferred URL structure

- indexation directives

When these signals align, search engines are more likely to select the intended version.

Similarity matters

Not every similar page is a duplicate. Some pages overlap but still serve distinct intent. For example, closely related category pages or product variation pages may deserve separate visibility if they target different searches.

That is why duplicate content SEO requires judgment, not blanket rules.

Common Causes of Duplicate Content

Most duplicate content problems are structural, not editorial.

URL Variations

A page may be accessible through multiple URLs because of parameters, trailing slash differences, case variations, or inconsistent protocol handling. These are classic technical duplication issues.

This is where URL structure SEO and canonical tag implementation become especially important.

CMS and Template Behavior

Many content management systems generate duplicate-like pages through archives, tags, attachment URLs, filtered results, or alternate category paths. Left unmanaged, these can clutter the index.

Ecommerce Filters and Product Variants

Large catalog sites often generate thousands of URLs through sort options, color filters, size variations, and session parameters. Some of these pages are useful. Many are not. The SEO challenge is deciding which deserve independent visibility and which should consolidate.

Reused or Syndicated Content

Duplicate content can also occur across domains when the same article, product description, or resource is republished elsewhere. This does not always create a problem, but it can if the original source is not clearly positioned as primary.

Important Subtopics in Duplicate Content SEO

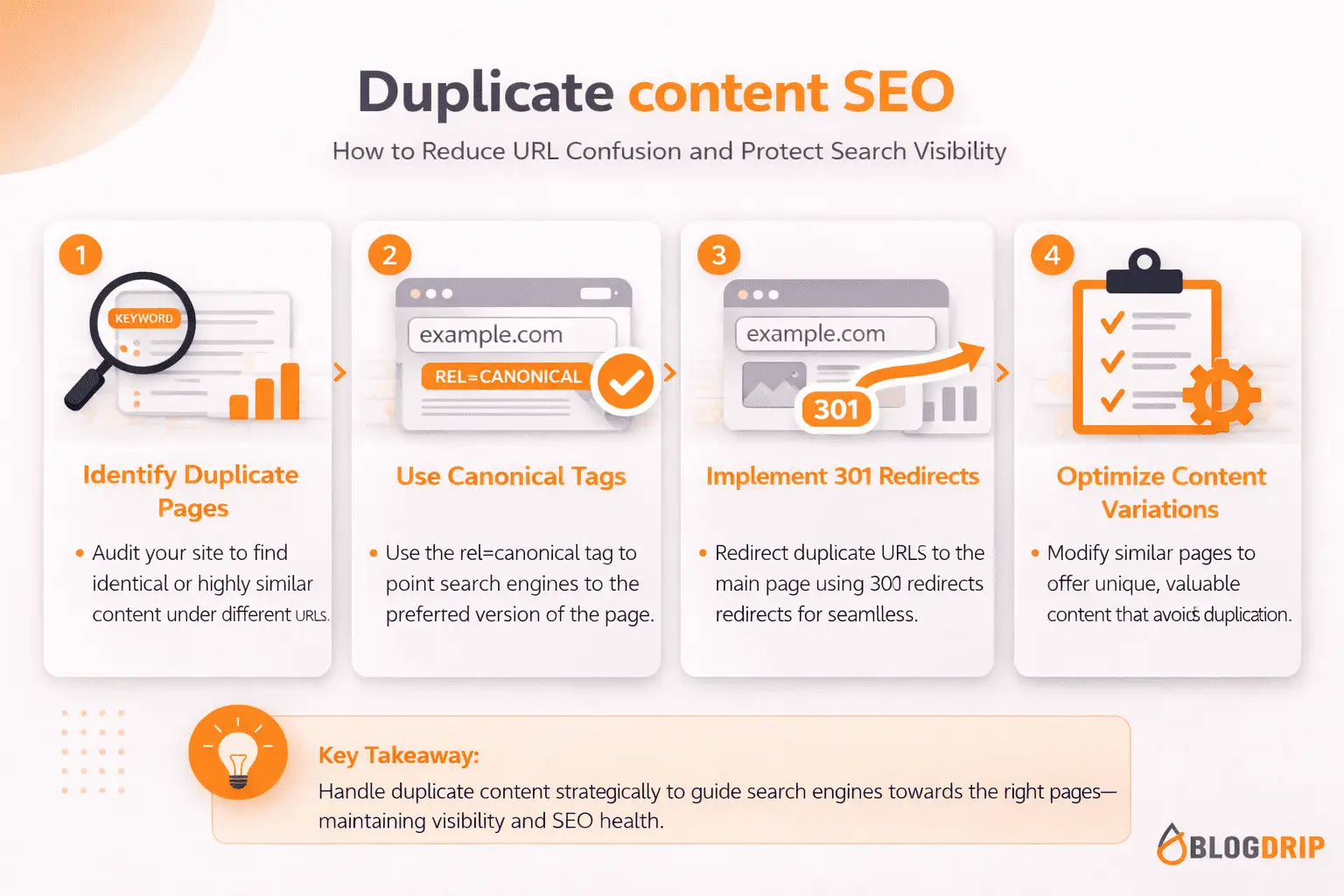

Canonical Tags

A canonical tag helps signal which version of a page should be treated as primary when similar URLs exist. It is one of the most important tools for duplicate content SEO, but it works best when supported by internal linking and sitemap consistency.

Redirects

If an old page has truly been replaced, a redirect is often better than a canonical tag. Redirects are stronger when only one live version should remain accessible.

Internal Linking

Internal links should point to the preferred version of a page. If your site frequently links to non-canonical or duplicate versions, you send mixed signals.

XML Sitemaps

Your sitemap should generally list preferred canonical URLs, not duplicates. A messy sitemap can reinforce the wrong version and reduce technical clarity.

Common Mistakes

One common mistake is assuming duplicate content always means a penalty. In most cases, the real issue is not punishment but confusion and inefficiency.

Another mistake is using canonical tags too aggressively. Pages that serve different intent should not automatically canonicalize to one another just because they are related.

Some teams also rely on canonical tags when a redirect would be more appropriate. If a page is obsolete and should no longer exist as a live version, canonicalization is usually not the cleanest fix.

It is also common to ignore internal duplication created by the CMS. Sites may spend time worrying about copied paragraphs while overlooking parameter-based URLs, filtered archives, or alternate category paths that create much larger duplication issues.

Practical Guidance

The best approach to duplicate content SEO is to start by identifying whether the issue is real duplication, near-duplication, or intentional overlap.

Then ask a simple question: should these URLs all exist as separate indexable pages?

If the answer is no, choose the right method to consolidate signals:

- use redirects when old or unnecessary versions should disappear

- use canonical tags when multiple live versions need one preferred page

- use noindex carefully when pages should remain accessible but stay out of search

- clean up internal linking so it supports the preferred version

- keep XML sitemaps focused on canonical URLs

Prioritize pattern-level issues

Do not review duplication only page by page. Look for structural patterns such as:

- tracking parameters

- filtered URL combinations

- duplicate archives

- protocol inconsistencies

- case-sensitive path variations

- multiple category paths to the same content

These are usually more important than isolated content overlap.

Match the fix to the cause

A canonical tag is not always the answer. Sometimes the right fix is a redirect, a better URL structure, a content merger, or a decision to let similar pages remain separate because they target different search intent.

That is what makes duplicate content SEO strategic rather than mechanical.

Timing and Expectations

Duplicate content fixes can help relatively quickly when they resolve obvious URL conflicts and indexation confusion. Search engines may consolidate preferred versions more effectively after recrawling the affected pages.

Still, it is important to stay realistic. Fixing duplication does not create rankings by itself. It removes friction. The gains often come from consolidating value around the right page, not from adding something new.

For that reason, duplicate content work is often most effective when tied to broader technical improvements such as better canonicals, cleaner internal linking, stronger sitemap hygiene, and clearer site architecture.

Conclusion

Duplicate content SEO is ultimately about clarity. When multiple URLs carry the same or nearly the same meaning, search engines need help understanding which one should be central.

Handled well, duplicate content management protects crawl efficiency, strengthens signal consolidation, and keeps your best pages in focus. Handled poorly, it creates mixed signals that weaken topical structure and search visibility.

As a cluster page, this article should support a broader technical SEO pillar page and connect naturally to related content on canonical tags, URL structure SEO, crawling and indexing, XML sitemap SEO, and robots.txt SEO. That is the right role for duplicate content SEO in a pillar-and-cluster strategy: not as a fear-based topic about penalties, but as a practical discipline for keeping the right pages central.